The Library of the Future

I believe that the research library, as it is found today in most large research institutions, is an anachronism. It is a product and symbol of a time before digitization, and its purpose was to house the end products of research, mainly published literature, that were static. It represents the state of the art of a world of knowledge that was inherently non-digital.

Ironically, another anachronism on a university campus is the large computing facility generally known as the High Performance Computing Center or something to that effect. This is in spite of such a facility embodying the very state of the art of a world of knowledge that is increasingly digital. Its weakness lies in the enormous investment required in static or sunk resources (buildings, air-conditioning, hardware, power) and in its lack of responsiveness to ever-fluctuating computing needs.

I believe these two institutions, the research library and the high performance computing center, can be combined into a service organization that can better serve the needs of today’s researcher.

A library has several functions. In my vision, the archival aspect of the library can continue to serve its role of providing access to paper papers (you know, the physical-only variety), books, journals and other historical material wherever physical access is valuable. Access to the published literature, however, will be increasingly provided through computers and mobile devices as more and more publications become digital-first or digital-only. In fact, access to even the already existing, non-digital material may be improved if such material is scanned and OCRed properly. Not only would its use increase because remote users would be able to see it over the web, but it might also become amenable to text and data mining (TDM) for analysis of trends, sentiments and other aspects of published text.

But, back to the library of the future. In today’s changing environment, libraries are also trying to recast themselves as stewards of research data. To that end, they are helping researchers write data management plans (DMPs), and even providing data storage and management facilities. It is this function that I believe can be done better by the library of the future, which will combine forces with the center for high performance computing of the future to act as an intermediary between the researcher and the backend cloud computing providers.

Actual computing services will be best provided by commercial cloud computing companies that will compete on merit and price. These commercial providers have excelled in the business of providing cloud computing resources. The world’s biggest clouds are run by Amazon, Google, and Microsoft, and a host of smaller competitors such as Digital Ocean and Linode also provide cloud computing services as a business. They have the technology and the ability to provide the best services possible at the lowest price, and to be more responsive than any academic or research organization could possibly be. That is partly because these commercial outfits have also managed to hire away the best researchers and technologists.

The library of the future will have specialist staff with skills and experience in both librarian functions and computing functions, and they may come from today’s library and computing center along with those from the offices of IP and IT. These experts will negotiate with the backend computing services providers on behalf of the researchers, and make it possible for the latter to get the services needed for their work. This will be the most important function of the library of the future: to serve as a broker for computing services on behalf of the researchers, ensuring that all locally pertinent legal, ethical and technical requirements of the project are met by the service providers at the best cost possible. The “cloud” will not be monolithic. It will be adaptive and responsive to local conditions. Researchers in Germany will follow German privacy/security laws and data standards, and the cloud computing service providers will offer services that hew to those local laws. If a particular dataset should not leave the boundaries of the country, the service provider will ensure that. On the other hand, if there is no such requirement, the service provider may be free to move the data around for the best possible price and performance.
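To make the brokering idea a little more concrete, here is a minimal sketch in Python of how such a rule might be expressed. Everything in it is an assumption made for illustration: the `Region` record, the `choose_region` function, the provider names and the prices are hypothetical and do not correspond to any real provider's API or price list. It simply picks the cheapest offering, restricted to one country when the dataset must stay there.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Region:
    """A hypothetical cloud offering negotiated by the library."""
    provider: str
    country: str           # two-letter country code, e.g. "DE"
    price_per_hour: float  # illustrative negotiated rate


def choose_region(regions: Iterable[Region],
                  must_stay_in: Optional[str] = None) -> Region:
    """Return the cheapest region, honoring a data residency rule if given."""
    candidates = [
        r for r in regions
        if must_stay_in is None or r.country == must_stay_in
    ]
    if not candidates:
        raise ValueError(f"no offering keeps the data in {must_stay_in!r}")
    return min(candidates, key=lambda r: r.price_per_hour)


# Made-up offerings, for illustration only.
offerings = [
    Region("ProviderA", "DE", 0.12),
    Region("ProviderB", "US", 0.08),
    Region("ProviderC", "DE", 0.10),
]

restricted = choose_region(offerings, must_stay_in="DE")  # data must stay in Germany
unrestricted = choose_region(offerings)                   # free to go anywhere
```

With the made-up numbers above, the restricted dataset lands on the cheaper of the two German offerings, while the unrestricted one goes wherever the price is lowest.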

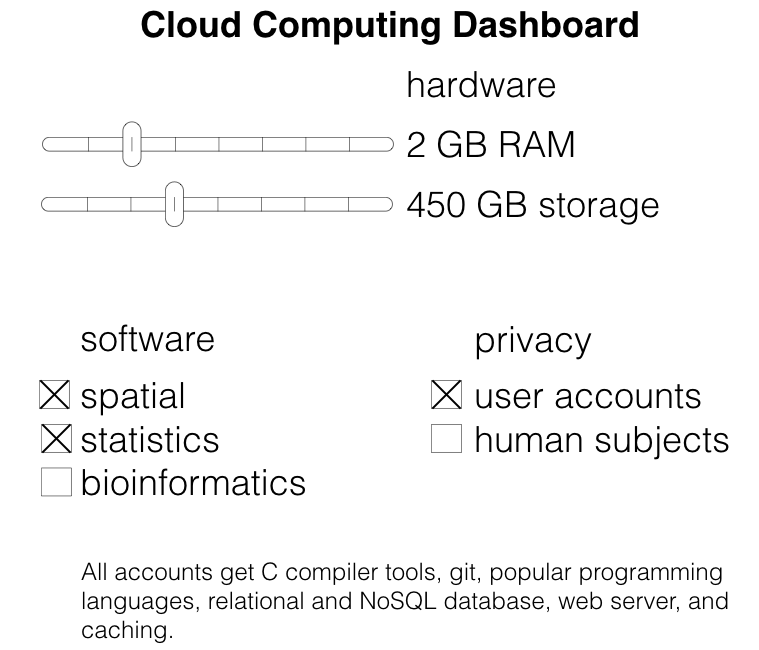

The researcher will build into her grant the funds for computing services at an institutionally negotiated rate. Once ready to start her project, she would fire up her computer and log on to the library services, where she would be presented with a dashboard. She would be able to click on a few buttons, adjust sliders, choose software and specify other project parameters (the most important being the use of human subjects or classified data) that would ensure the right cloud instance is configured for her. The entire software toolchain, source control management, data archiving, advertising and discovery services, and a basic project website would be automatically bootstrapped. Data would be appropriately de-identified and encrypted, if required, and persistent IDs automatically generated. Free and open source versions of all software would be offered by default, and non-free/proprietary software would be installed only after proper justification. The library staff would have worked out with the computing services provider all necessary and proper protocols specific to the research project, so the researcher would not have to spend any time thinking of anything other than her research. She would be able to add other users, her staff, students and other team members to her computing resource with just a few clicks. The basic but adequate project website would serve as a gateway to all public output of the project under appropriate open licenses by default.
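A rough sketch of what might sit behind such a dashboard, again in Python and again purely illustrative: `ProjectRequest`, `build_configuration`, the field names, the default package list and the service names are all my assumptions, not a description of any existing system. The sketch turns the researcher's choices into a configuration, switches on encryption and de-identification when sensitive data is involved, and admits proprietary software only when a justification is supplied.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Sequence


@dataclass
class ProjectRequest:
    """What the researcher enters on the (hypothetical) dashboard."""
    name: str
    cpu_cores: int
    storage_gb: int
    human_subjects: bool = False
    classified_data: bool = False
    # Proprietary package name -> written justification (may be empty).
    proprietary_software: Dict[str, str] = field(default_factory=dict)
    team: List[str] = field(default_factory=list)


def build_configuration(
    req: ProjectRequest,
    open_source_defaults: Sequence[str] = ("git", "python", "r"),
) -> dict:
    """Translate a request into a configuration for the cloud provider."""
    sensitive = req.human_subjects or req.classified_data

    # Free and open source software is offered by default; proprietary
    # packages are added only when a non-empty justification is given.
    software = list(open_source_defaults)
    for package, justification in req.proprietary_software.items():
        if justification.strip():
            software.append(package)

    return {
        "project": req.name,
        "cpu_cores": req.cpu_cores,
        "storage_gb": req.storage_gb,
        "encrypt_data": sensitive,
        "deidentify_data": req.human_subjects,
        "software": software,
        "services": ["source_control", "data_archive",
                     "discovery", "project_website"],
        "users": list(req.team),
    }


request = ProjectRequest(
    name="household-survey",
    cpu_cores=8,
    storage_gb=500,
    human_subjects=True,
    proprietary_software={
        "ProprietaryStats": "required to rerun legacy analysis scripts",
        "ProprietaryGIS": "",  # no justification, so it is not installed
    },
    team=["student1", "postdoc1"],
)
config = build_configuration(request)
```

In practice the protocols worked out by the library staff would be far richer than a handful of booleans, but the shape is the same: the researcher states what the project is, and the rules decide what gets provisioned.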

Is the above vision likely to become real? Most likely not. I believe there are too many entrenched interests on both the library and the computing services sides, as well as old-fashioned thinking in the research institutions themselves, where there is both a desire to “own” resources on-campus and a distrust of commercial providers. But it is fun to dream on.